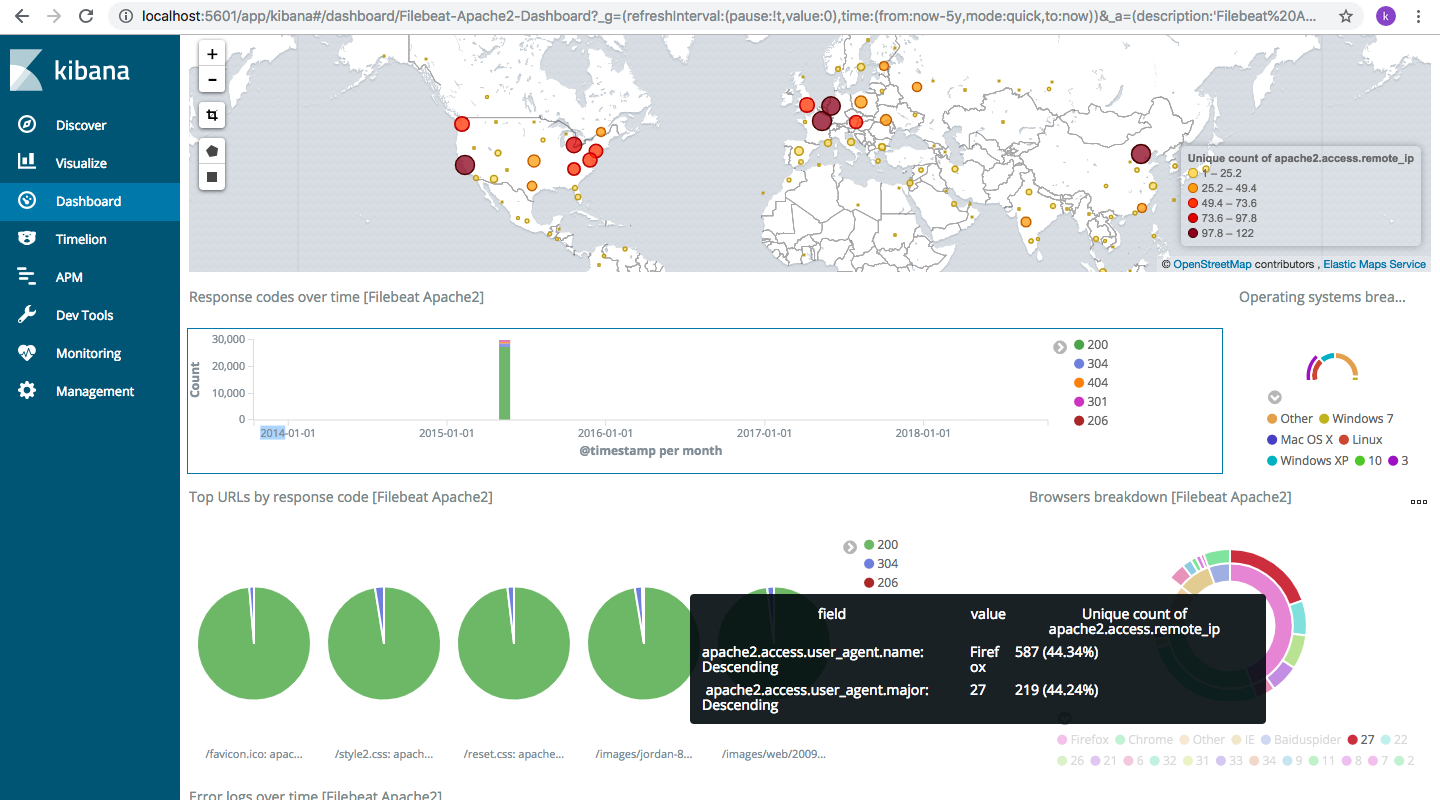

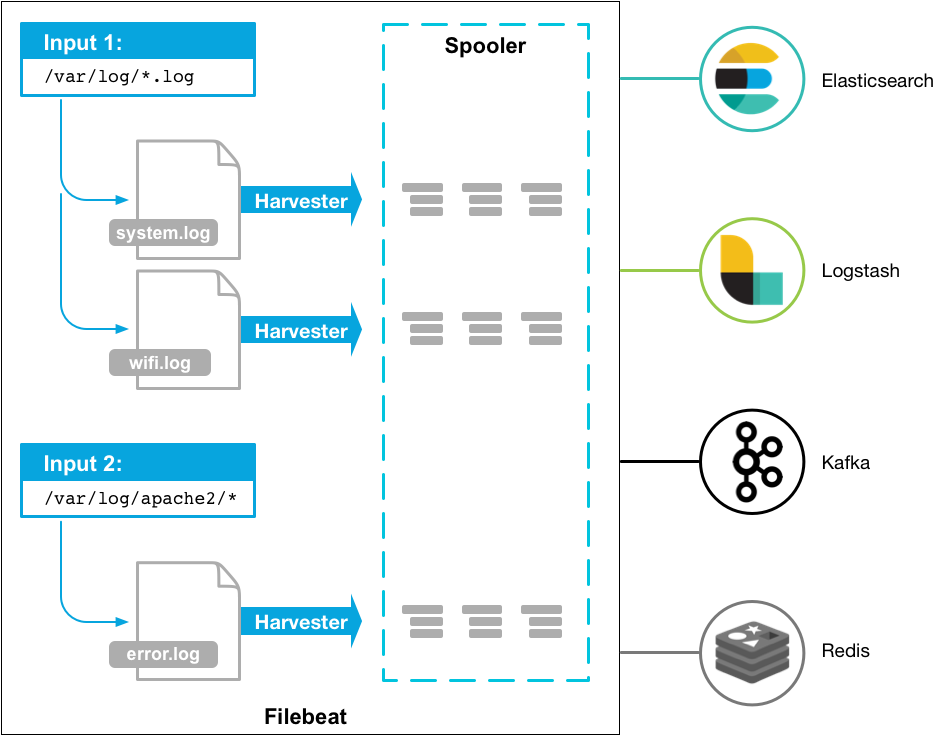

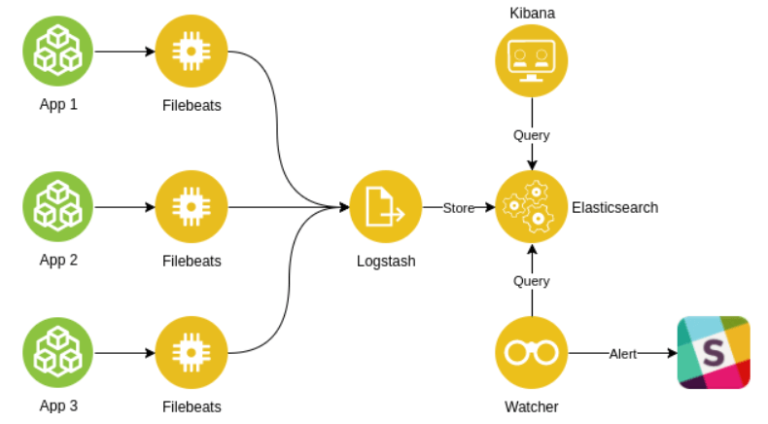

In this setup, I have an Ubuntu host machine running Elasticsearch and Kibana as Docker containers. I will bind the Elasticsearch and Kibana ports to my host machine so that my Filebeat container can reach both Elasticsearch and Kibana. I've also got another Ubuntu virtual machine running, which I've provisioned with Vagrant. In this client VM, I will be running Nginx and Filebeat as containers. The idea is that the Filebeat container collects the logs from all the containers running on the client machine and ships them to Elasticsearch running on the host machine. You can configure Filebeat to collect logs from as many containers as you want; for this demo, I will only be running one application container (Nginx).

Run Elasticsearch and Kibana as Docker containers on the host machine

To run Elasticsearch and Kibana as Docker containers, I'm using docker-compose as follows: `version: '2.2'` … `image: /elasticsearch/elasticsearch:7.9.2` … `cluster.initial_master_nodes=elasticsearch` … `image: /kibana/kibana:7.9.2`

Copy the above docker-compose file and run it with the command `sudo docker-compose up -d`. This docker-compose file will start the two containers, as shown in the following output.

You can check the running containers using `sudo docker ps`, and the logs of the containers can be checked using `sudo docker-compose logs -f`.

We should now be able to access Elasticsearch and Kibana from the browser. Just type localhost:9200 to access Elasticsearch. Similarly, for Kibana, type localhost:5601 in your browser.

Run Nginx and Filebeat as Docker containers on the virtual machine

Now, let's move to our VM and deploy Nginx first: `sudo docker run -d -p 8080:80 --name nginx nginx`

You can check whether it's properly deployed by using this command on your terminal. This should get you the following response.

We should also be able to access the Nginx webpage through our browser. For that, we need to know the IP of our virtual machine.

Now, we only have to deploy the Filebeat container. Use the following command to download the image: `sudo docker pull /beats/filebeat:7.9.2`

To run the Filebeat container, we first need to set up the Elasticsearch host that is going to receive the logs shipped from Filebeat. This command will do that: `sudo docker run \` …

Replace the field `host_ip` with the IP address of your host machine and run the command.

Now let's set up Filebeat using the sample configuration file given below: `nfig:` … `hosts: '$'`

Finally, use the following command to run the Filebeat container with the configuration file, the Docker containers' log directory, and the Docker socket mounted as read-only volumes: `… --volume="$(pwd)/:/usr/share/filebeat/filebeat.yml:ro" --volume="/var/lib/docker/containers:/var/lib/docker/containers:ro" --volume="/var/run/docker.sock:/var/run/docker.sock:ro" /beats/filebeat:7.9.2 filebeat -e -strict.perms=false`

Now we can go to Kibana and visualize the logs being sent from Filebeat.

If you followed the official Filebeat getting started guide and are routing data from Filebeat -> Logstash -> Elasticsearch, then the data produced by Filebeat is supposed to be contained in a filebeat-YYYY.MM.dd index. It uses the filebeat-* index instead of the logstash-* index so that it can use its own index template and have exclusive control over the data in that index.

Please feel free to drop any comments, questions, or suggestions.
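The docker-compose file for the host machine is only given in fragments above (`version: '2.2'`, the two `image:` lines, and `cluster.initial_master_nodes=elasticsearch`). A minimal sketch of a complete single-node file could look like the following. It assumes the official images from Elastic's registry at `docker.elastic.co`, a 512 MB JVM heap, and a named volume called `esdata`; these are illustrative choices, not the author's exact file.

```yaml
version: '2.2'
services:
  elasticsearch:
    # Assumption: official image from Elastic's registry (docker.elastic.co)
    image: docker.elastic.co/elasticsearch/elasticsearch:7.9.2
    container_name: elasticsearch
    environment:
      - node.name=elasticsearch
      - cluster.name=docker-cluster
      # Single node that bootstraps the cluster by electing itself as master
      - cluster.initial_master_nodes=elasticsearch
      # Lock the JVM heap in memory; requires the memlock ulimits below
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      # Hypothetical named volume so index data survives container restarts
      - esdata:/usr/share/elasticsearch/data
    ports:
      # Published on the host so the Filebeat container on the VM can reach it
      - 9200:9200
  kibana:
    # Assumption: official image from Elastic's registry (docker.elastic.co)
    image: docker.elastic.co/kibana/kibana:7.9.2
    container_name: kibana
    environment:
      # The Kibana image maps this variable to elasticsearch.hosts
      ELASTICSEARCH_HOSTS: http://elasticsearch:9200
    ports:
      - 5601:5601
    depends_on:
      - elasticsearch
volumes:
  esdata:
    driver: local
```

Because the container listens on a non-loopback address, Elasticsearch enforces its bootstrap checks; on Linux hosts this usually means raising `vm.max_map_count` to at least 262144 (for example with `sudo sysctl -w vm.max_map_count=262144`) before running `sudo docker-compose up -d`.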
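The Filebeat configuration is likewise only partly preserved above (`nfig:` … `hosts: '$'`). The sketch below is modeled on the sample `filebeat.docker.yml` that Elastic publishes for running Filebeat on Docker; treat it as an assumption about what the sample contains rather than the author's file. The `ELASTICSEARCH_HOSTS` variable and its default come from that sample; on the client VM it has to resolve to the host machine (`host_ip:9200`).

```yaml
# Sketch of a filebeat.docker.yml, modeled on Elastic's sample Docker config.
# Load module configs from modules.d, if any are present.
filebeat.config:
  modules:
    path: ${path.config}/modules.d/*.yml
    reload.enabled: false

# Watch the Docker daemon through the mounted /var/run/docker.sock and start
# a log input for each running container; the default hints config reads the
# container log files under /var/lib/docker/containers.
filebeat.autodiscover:
  providers:
    - type: docker
      hints.enabled: true

processors:
  - add_cloud_metadata: ~

output.elasticsearch:
  # Read ELASTICSEARCH_HOSTS from the environment, falling back to
  # elasticsearch:9200. On the client VM this must point at the host machine
  # (e.g. host_ip:9200), or be overridden on the command line with
  # -E output.elasticsearch.hosts=["host_ip:9200"].
  hosts: '${ELASTICSEARCH_HOSTS:elasticsearch:9200}'
```

This is why the run command mounts both `/var/lib/docker/containers` and `/var/run/docker.sock`: the socket lets the autodiscover provider see which containers are running, and the log directory gives Filebeat read access to their output.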
